Production AI agents are no longer just chat interfaces.

They retrieve context, call tools, update records, trigger workflows, write code, summarize customer history, query databases, and make recommendations that influence business decisions.

That changes the engineering model.

A chatbot answers. A production agent acts.

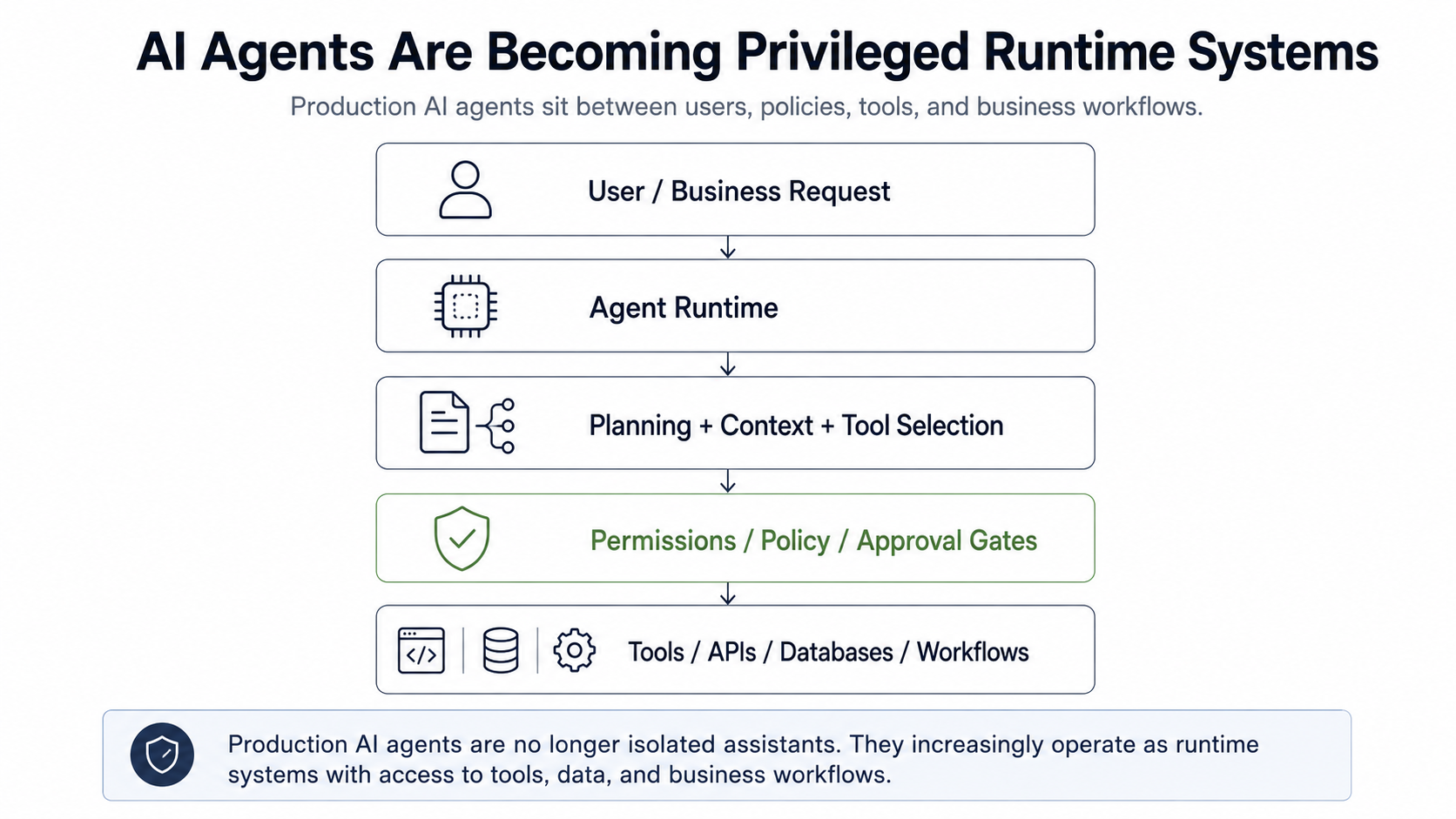

And once an AI system can act across tools, data, APIs, and workflows, it becomes something more serious than a user interface. It becomes a privileged runtime layer.

In my previous post, "The Invisible Failures of Production AI Agents: Why Observability Is the Critical Systems Layer," I argued that production agents need observability because many agent failures do not appear as traditional software failures.

Observability answers one question:

What happened during the agent run?

Governance asks the next question:

Was the agent allowed to do what it did?

That is where production agent architecture becomes more difficult.

Figure 1: Production AI agents increasingly sit between users, tools, data, policies, and business workflows. That makes them runtime systems, not just chat interfaces.

The Shift: From Assistant to Actor

Most early AI assistants were low-risk because they were largely passive.

They generated text, answered questions, and helped users think.

Modern agents are different.

They can:

- retrieve private or business-critical context

- call external tools

- write to operational systems

- trigger customer-facing workflows

- interact with databases

- coordinate with other agents

- maintain memory across sessions

This is not just a product shift. It is an architectural shift.

Protocols such as the Model Context Protocol are making it easier for LLM applications to connect with external tools, resources, and prompts. That connectivity is useful. It is also what turns an AI assistant into an actor inside a broader software environment.

The risk is no longer only that the model gives the wrong answer.

The risk is that the system takes a valid-looking action under the wrong authority, with the wrong context, at the wrong time.

That is a production architecture problem.

The Enterprise Signal

The market is already moving in this direction.

Enterprise platforms are no longer treating agents as isolated chatbots. Google describes Gemini Enterprise agents as part of a platform that gives organizations centralized visibility, control, deployment, and governance over agents created by Google, third parties, and internal teams.

That does not mean every enterprise agent deployment is mature.

It means the direction is clear.

The agent stack is expanding from:

Model + Prompt

to:

Model + Context + Tools + Identity + Policies + Observability + Governance

This is the difference between an impressive demo and a production system.

The demo focuses on task completion.

The production system focuses on controlled execution.

Why Traditional Permission Models Break Down

Traditional software usually has clear execution paths.

A user clicks a button, the application checks permissions, and the system performs a predefined action.

Agents introduce a different pattern.

The user may ask for an outcome, but the agent decides the intermediate steps. It may retrieve context, select tools, transform data, retry failed operations, escalate to another system, or make intermediate assumptions that shape the final action.

That means governance can no longer happen only at the edge of the application.

It has to happen throughout the agent runtime.

A production agent needs controls around:

- who requested the action

- which agent is acting

- what tools the agent can access

- what data the agent can retrieve

- which actions require approval

- what policies apply at each step

- what evidence must be recorded

- how the action can be audited later

This aligns with the broader direction of NIST's AI Risk Management Framework, which treats governance as a cross-cutting function across the AI lifecycle, not as a one-time launch review.

Without these controls, the organization does not have governed automation.

It has delegated authority without a reliable control plane.

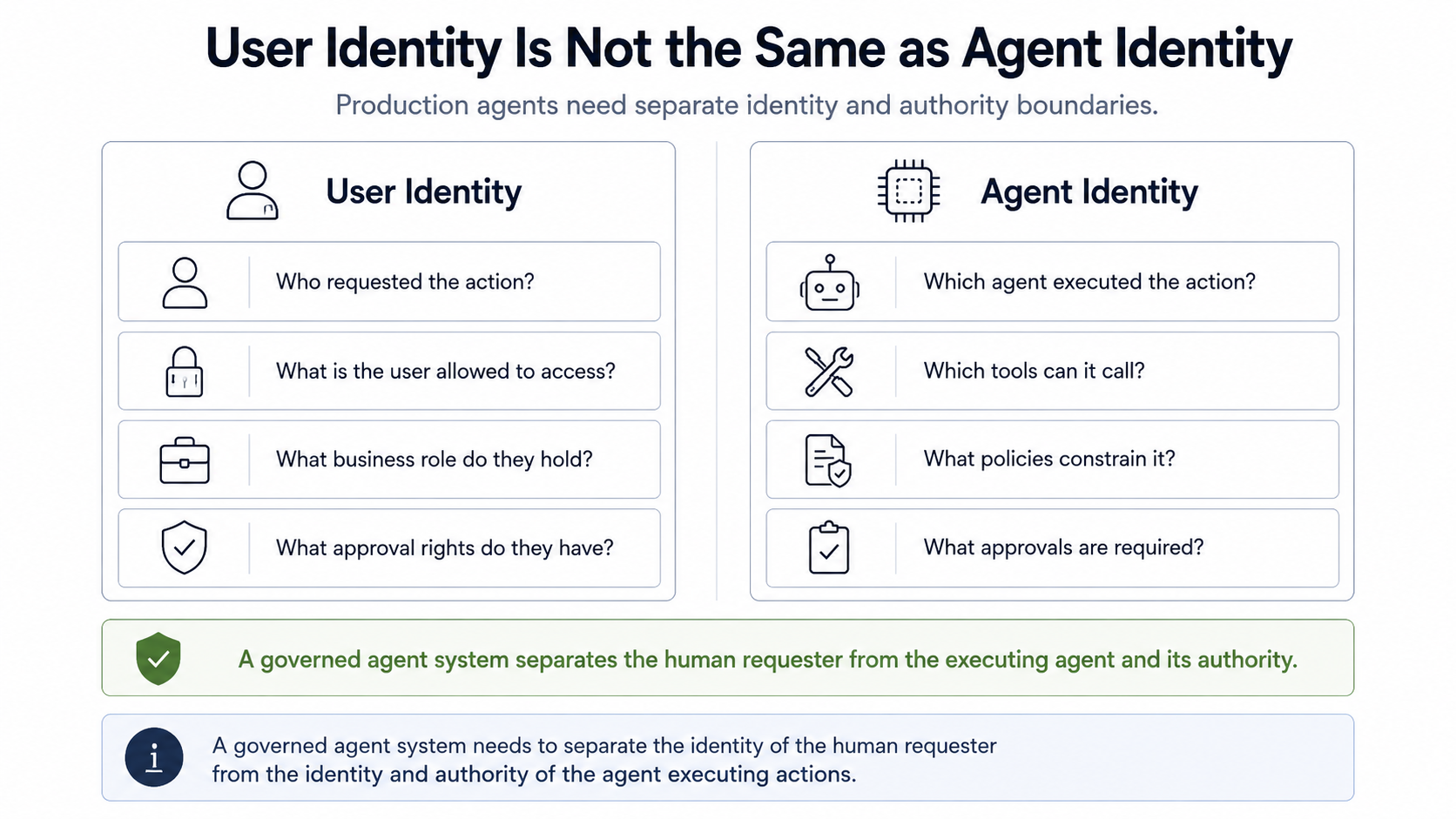

The Identity Problem

One of the most under-discussed issues in production AI agents is identity.

When an agent takes an action, whose authority is it using?

Is it using the user’s authority, the application’s authority, the agent’s authority, the service account’s authority, or the workflow owner’s authority?

This distinction matters.

A user may be allowed to view a customer record but not modify billing data. An agent may be allowed to summarize the record but not send an email. A workflow may be allowed to draft a refund recommendation but not approve the refund. A support agent may be allowed to call a CRM tool but not access payroll, legal, or security systems.

If all of those actions happen through one broad service account, the system becomes difficult to govern.

This is not a theoretical concern. OWASP's Excessive Agency guidance calls out the risk of LLM extensions using generic high-privilege identities and recommends executing actions in the specific user's context with the minimum required scope.

A mature agent architecture needs separation between:

- User identity: Who requested the action?

- Agent identity: Which agent executed the action?

- Tool identity: Which integration performed the operation?

- Workflow identity: Which business process authorized the action?

- Policy identity: Which rule allowed, blocked, escalated, or modified the action?

This is not bureaucracy.

It is how organizations preserve accountability when software systems become more autonomous.
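To make the separation concrete, here is a minimal sketch of an action context that carries all five identities. The class name, field names, and ID formats are hypothetical, chosen only for illustration; a real system would source these values from its identity provider and policy engine.

```python
from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class ActionContext:
    """Identities attached to one agent action (all names are illustrative)."""
    user_id: str                      # who requested the action
    agent_id: str                     # which agent executed it
    tool_id: str                      # which integration performed the operation
    workflow_id: str                  # which business process authorized it
    policy_id: Optional[str] = None   # which rule decided; set after evaluation


def with_policy(ctx: ActionContext, policy_id: str) -> ActionContext:
    """Return a copy of the context stamped with the policy that decided the action."""
    return replace(ctx, policy_id=policy_id)


def is_fully_attributed(ctx: ActionContext) -> bool:
    """True only when every identity is present, so the action can be audited later."""
    return all([ctx.user_id, ctx.agent_id, ctx.tool_id, ctx.workflow_id, ctx.policy_id])


# Hypothetical example: a support agent reading a CRM record for a user.
ctx = ActionContext("user:alice", "agent:support-bot", "tool:crm.read", "workflow:ticket-triage")
decided = with_policy(ctx, "policy:support-read-only")
```

The point of the sketch is that no single field stands in for the others. An action that cannot name all five is an action that cannot be fully attributed afterwards.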

Figure 2: A governed agent system needs to separate the human requester, the agent runtime, the tool integration, and the policy decision that authorized the action.

Least Privilege Becomes More Important, Not Less

Agents should not receive broad tool access just because they are useful.

- A customer-support agent does not need database-wide write access.

- A finance assistant does not need unrestricted email privileges.

- A code-generation agent does not need production deployment rights by default.

- A research agent does not need access to every internal document.

The principle should be simple:

An agent should have the minimum capability required for the current task, for the minimum duration necessary, under the appropriate policy constraints.

This is the agentic version of least privilege.

Instead of asking, “Can this agent use tools?” teams should ask:

- Which tools?

- For which users?

- Under which conditions?

- With what input constraints?

- With what output validation?

- With what approval requirements?

- With what audit trail?

- With what rollback plan?

This is where many agent prototypes break when they move toward production.

They were built for usefulness first and authority second.

In production systems, authority has to be designed from the beginning.
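One way to picture "minimum capability, minimum duration" is a grant that names a single agent, user, and tool, carries an input constraint, and expires. The sketch below is an assumption about how such a grant could look, not a reference to any specific product; `CapabilityGrant`, `max_amount`, and the tool names are invented for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class CapabilityGrant:
    """A narrow, expiring permission for one agent acting for one user (illustrative)."""
    agent_id: str
    user_id: str
    tool: str
    expires_at: datetime
    max_amount: float = 0.0   # example of an input constraint

    def allows(self, agent_id: str, user_id: str, tool: str,
               amount: float, now: datetime) -> bool:
        """Allow only an exact match on agent, user, and tool, within limit and lifetime."""
        return (
            agent_id == self.agent_id
            and user_id == self.user_id
            and tool == self.tool
            and amount <= self.max_amount
            and now < self.expires_at
        )


# Hypothetical grant: refunds up to 50.0 for the next 15 minutes.
issued = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
grant = CapabilityGrant(
    agent_id="agent:refund-bot",
    user_id="user:alice",
    tool="refund.create",
    expires_at=issued + timedelta(minutes=15),
    max_amount=50.0,
)
```

Passing `now` in explicitly keeps the check deterministic and easy to test. The default `max_amount` of zero means a grant created without an explicit limit permits nothing above zero, which errs on the restrictive side.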

Tool Access Expands the Attack Surface

Tool use is what makes agents valuable.

It is also what makes them risky.

Once an agent can call tools, the system is exposed to failure modes that do not look like ordinary application bugs:

- the agent selects the wrong tool

- the tool description is misleading

- retrieved context influences the agent toward unsafe behavior

- a prompt injection changes the agent’s interpretation of a task

- the agent passes malformed or risky parameters

- the tool succeeds technically but causes a business failure

- the action cannot be reconstructed later

This is why tool access should not be treated as a simple plugin layer.

It should be treated as a governed interface between probabilistic reasoning and deterministic systems.

OWASP's Prompt Injection guidance is especially relevant here. Indirect prompt injection can come from external content such as websites, documents, or files. In a tool-using agent, that manipulated context can influence not just what the model says, but what connected systems the agent tries to use.

Every important tool call should have:

- a defined schema

- a permission boundary

- input validation

- output validation

- policy checks

- approval logic for high-impact actions

- execution logging

- traceability to the requesting user and agent

The more powerful the tool, the stronger the governance boundary needs to be.
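As a rough illustration, several of those requirements can live in one wrapper that every tool call passes through. This is a simplified sketch under stated assumptions: `governed_call`, the schema format (argument name to Python type), the in-memory `AUDIT_LOG`, and the `crm.lookup` tool are all hypothetical, and approval logic is left out.

```python
from typing import Any, Callable, Dict, List, Set

AUDIT_LOG: List[Dict[str, Any]] = []  # stand-in for a real, durable log sink


def governed_call(
    tool_name: str,
    handler: Callable[..., Dict[str, Any]],
    schema: Dict[str, type],
    allowed_tools: Set[str],
    user_id: str,
    agent_id: str,
    args: Dict[str, Any],
) -> Dict[str, Any]:
    """Run one tool call behind permission, validation, and logging checks."""
    record: Dict[str, Any] = {"tool": tool_name, "user": user_id, "agent": agent_id, "args": args}
    try:
        # Permission boundary: the agent must be allowed to use this tool.
        if tool_name not in allowed_tools:
            raise PermissionError(f"{agent_id} may not call {tool_name}")
        # Input validation: exactly the declared arguments, with the declared types.
        if set(args) != set(schema):
            raise ValueError(f"expected arguments {sorted(schema)}, got {sorted(args)}")
        for key, expected in schema.items():
            if not isinstance(args[key], expected):
                raise ValueError(f"argument {key!r} must be {expected.__name__}")
        result = handler(**args)
        # Output validation: this sketch only requires a dict with a "status" key.
        if not isinstance(result, dict) or "status" not in result:
            raise ValueError("tool returned an unexpected shape")
        record["outcome"] = "ok"
        record["result"] = result
        return result
    except (PermissionError, ValueError) as exc:
        record["outcome"] = f"rejected: {exc}"
        raise
    finally:
        # Execution logging: every attempt is recorded, allowed or not.
        AUDIT_LOG.append(record)


def lookup_customer(customer_id: str) -> Dict[str, Any]:
    """Hypothetical read-only CRM tool."""
    return {"status": "found", "customer_id": customer_id}


result = governed_call(
    "crm.lookup", lookup_customer, {"customer_id": str},
    {"crm.lookup"}, "user:alice", "agent:support-bot", {"customer_id": "c-123"},
)
```

Logging in the `finally` block means rejected attempts leave the same trail as successful ones, which is what makes the action reconstructable later.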

Approval Gates Are Not a Weakness

Some teams resist human approval because they think it makes agents less autonomous.

That is the wrong framing.

Approval gates are not a failure of autonomy. They are a way to safely allocate autonomy.

A mature production system should not treat every action the same.

- Low-risk actions can be automated.

- Medium-risk actions can be reviewed asynchronously.

- High-risk actions should require explicit approval.

- Irreversible actions should require stronger controls.

For example:

- An agent summarizing a support ticket may not need approval.

- An agent drafting a customer email may need review.

- An agent issuing a refund may need policy validation.

- An agent changing account permissions should require explicit authorization.

- An agent modifying production data should have strong controls and auditability.

This is consistent with OWASP's Excessive Agency mitigation guidance, which recommends human approval for high-impact actions and complete mediation so downstream systems validate requests against security policies.

The goal is not to block agents.

The goal is to match the level of autonomy to the level of risk.
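The tiering above can be sketched as a small routing table. The risk assigned to each action and the route names are my assumptions for illustration; each organization would set its own. Unknown actions are deliberately treated as the highest tier, so the router fails closed.

```python
from enum import Enum


class Risk(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    IRREVERSIBLE = 4


class Route(Enum):
    AUTO_EXECUTE = "auto_execute"
    EXECUTE_THEN_REVIEW = "execute_then_review"            # asynchronous review
    REQUIRE_APPROVAL = "require_approval"                  # explicit human approval
    REQUIRE_APPROVAL_AND_AUDIT = "require_approval_and_audit"  # stronger controls


# Hypothetical risk assignments for the example actions in this section.
ACTION_RISK = {
    "summarize_ticket": Risk.LOW,
    "draft_customer_email": Risk.MEDIUM,
    "change_account_permissions": Risk.HIGH,
    "modify_production_data": Risk.IRREVERSIBLE,
}

ROUTE_FOR_RISK = {
    Risk.LOW: Route.AUTO_EXECUTE,
    Risk.MEDIUM: Route.EXECUTE_THEN_REVIEW,
    Risk.HIGH: Route.REQUIRE_APPROVAL,
    Risk.IRREVERSIBLE: Route.REQUIRE_APPROVAL_AND_AUDIT,
}


def route_action(action: str) -> Route:
    """Map an action to its handling; unregistered actions get the strictest route."""
    risk = ACTION_RISK.get(action, Risk.IRREVERSIBLE)
    return ROUTE_FOR_RISK[risk]
```

Keeping the table as data rather than scattered `if` statements makes the autonomy policy something a reviewer can read and change in one place.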

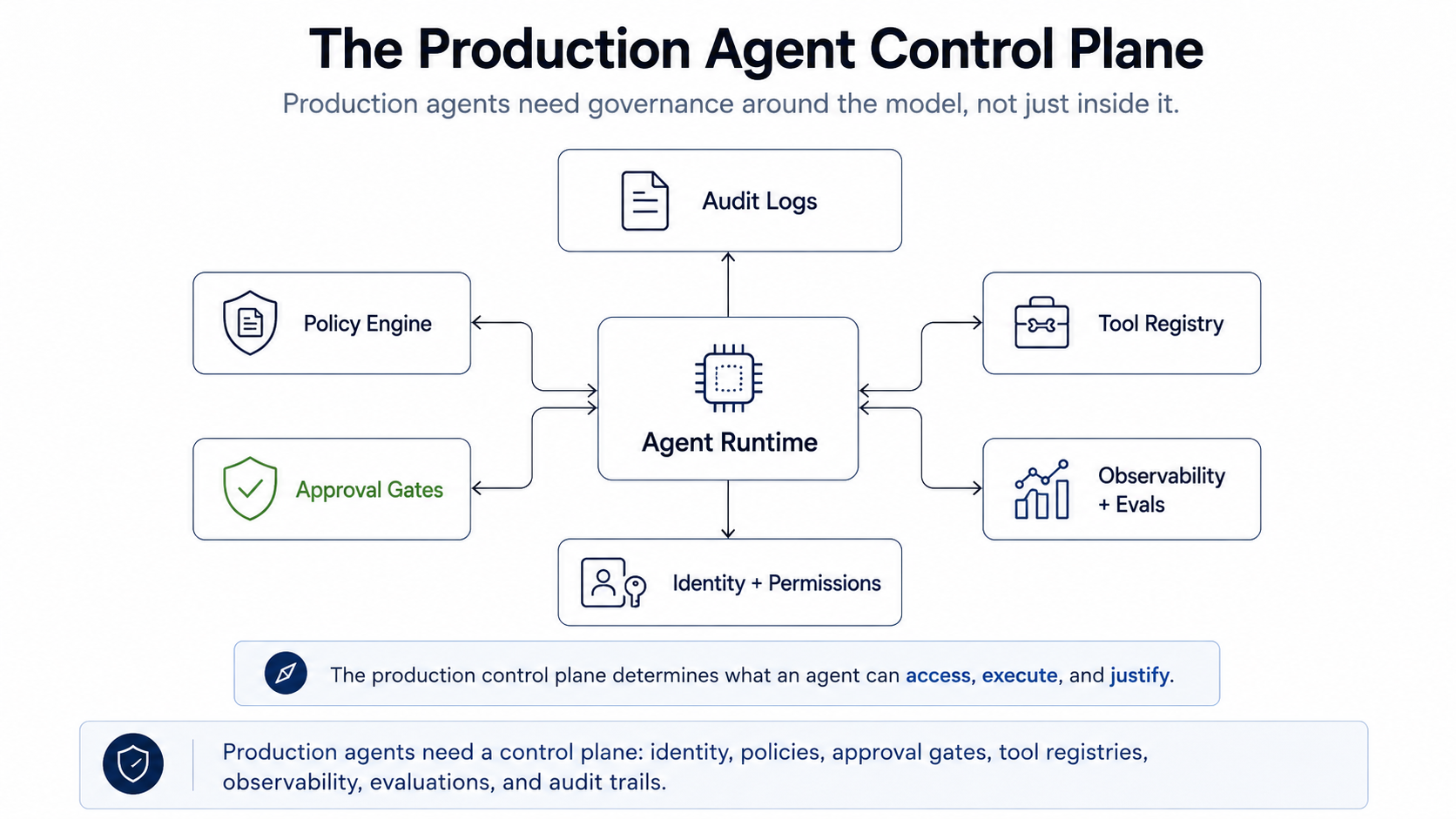

The Production Agent Control Plane

The emerging pattern is clear.

Production agents need a control plane around the model.

That control plane includes:

- Identity: Who is acting, on behalf of whom, through which system?

- Permissions: What can the agent access, call, modify, or trigger?

- Policies: What rules determine whether an action is allowed, blocked, escalated, or reviewed?

- Tool registry: Which tools exist, what they do, who owns them, and what risk level they carry?

- Observability: Can the organization reconstruct what happened during the agent run?

- Evaluations: Can the system measure whether the agent’s behavior was correct, grounded, safe, and useful?

- Approval workflows: Which actions require human judgment before execution?

- Auditability: Can the system explain who authorized an action, what evidence was used, and what outcome followed?

This is where agent observability connects directly to governance.

Observability gives the organization execution evidence.

Governance determines whether the execution should have been allowed.

Both are required for production AI agents.
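Of the components above, the tool registry is probably the easiest to start with. A minimal sketch, assuming an in-memory store and invented names (`ToolEntry`, `ToolRegistry`, the `crm.lookup` tool), might look like this:

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class ToolEntry:
    """What the registry knows about one tool (fields are illustrative)."""
    name: str
    description: str
    owner: str          # team or person accountable for the tool
    risk_level: str     # e.g. "low", "medium", "high"
    enabled: bool = True


class ToolRegistry:
    """In-memory registry; a real one would be persistent and access-controlled."""

    def __init__(self) -> None:
        self._tools: Dict[str, ToolEntry] = {}

    def register(self, entry: ToolEntry) -> None:
        if not entry.owner:
            raise ValueError("every tool needs an owner")
        self._tools[entry.name] = entry

    def resolve(self, name: str) -> Optional[ToolEntry]:
        """Return the tool only if it is registered and currently enabled."""
        entry = self._tools.get(name)
        return entry if entry is not None and entry.enabled else None

    def disable(self, name: str) -> None:
        """Kill switch: take a tool away from every agent at once."""
        self._tools[name].enabled = False


registry = ToolRegistry()
registry.register(ToolEntry("crm.lookup", "Read a customer record", "team:support-platform", "low"))
```

If agents can only reach tools through `resolve`, then `disable` gives operators a single place to withdraw a capability during an incident.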

Figure 3: Production agents need a control plane: identity, permissions, policies, approval gates, tool registries, observability, evaluations, and audit trails.

The Practical Checklist

Before deploying agents into real workflows, teams should ask:

- Does every agent have a distinct identity?

- Are agent permissions separate from user permissions?

- Are tools scoped by task, user, risk, and environment?

- Are high-impact actions routed through approval gates?

- Are tool calls logged with inputs, outputs, policies, and side-effect IDs?

- Can we reconstruct why an agent took an action?

- Can we revoke or reduce an agent’s authority quickly?

- Are retrieval permissions enforced before context reaches the model?

- Are sensitive actions evaluated before execution, not only after completion?

- Is there a clear owner for each agent, tool, and policy?

If the answer to most of these is no, the system is probably not ready for production autonomy.

It may still be useful.

But it should be treated as assisted automation, not trusted delegation.
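One checklist item that is easy to get wrong is enforcing retrieval permissions before context reaches the model. The sketch below assumes a simple group-based access list on each document; the `Document` type, the group names, and the sample text are hypothetical.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: FrozenSet[str]   # groups permitted to read this document


def filter_for_user(candidates: List[Document], user_groups: FrozenSet[str]) -> List[Document]:
    """Keep only documents that share at least one group with the requesting user."""
    return [doc for doc in candidates if doc.allowed_groups & user_groups]


def build_context(candidates: List[Document], user_groups: FrozenSet[str]) -> str:
    """Assemble model context from permitted documents only."""
    permitted = filter_for_user(candidates, user_groups)
    return "\n\n".join(doc.text for doc in permitted)


# Hypothetical retrieval results for a support user.
retrieved = [
    Document("kb-1", "Refund policy: 30 days.", frozenset({"support", "finance"})),
    Document("hr-7", "Salary bands for 2025.", frozenset({"hr"})),
]
context = build_context(retrieved, frozenset({"support"}))
```

The filter runs before prompt assembly, so text the user cannot read never enters the model's context. Filtering the model's output afterwards is a weaker control, because the restricted content has already influenced the response.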

What Changes for Engineering Teams

The practical implication is simple:

Agent engineering has to move closer to distributed systems engineering, security engineering, and platform engineering.

Teams need to design for:

- dynamic execution paths

- probabilistic tool selection

- scoped authority

- policy enforcement

- human approval

- runtime observability

- incident response

- controlled rollback

- auditability

This does not mean every agent needs heavyweight infrastructure.

A low-risk internal summarizer does not need the same governance stack as an agent that can issue refunds, update production data, or send customer-facing communications.

But the principle is the same:

The more authority an agent has, the stronger the control plane must be.

The Bottom Line

The next phase of AI agents will not be defined only by better models.

It will be defined by better runtime control.

As agents gain access to tools, data, workflows, and business processes, they become part of the operational fabric of the organization. That makes identity, permissions, policy enforcement, approval gates, observability, and auditability core infrastructure.

The question is no longer only:

Can the agent complete the task?

The better question is:

Can the agent complete the task under the right authority, with the right constraints, while leaving behind enough evidence to trust and audit the outcome?

That is the standard production AI agents need to meet.

Anything less is not autonomy.

It is unmanaged execution.

Sources

- clype, The Invisible Failures of Production AI Agents: Why Observability Is the Critical Systems Layer

- Model Context Protocol, Specification

- OWASP GenAI Security Project, LLM06:2025 Excessive Agency

- OWASP GenAI Security Project, LLM01:2025 Prompt Injection

- NIST AI Risk Management Framework Core

- NIST SP 800-53 Rev. 5, Security and Privacy Controls for Information Systems and Organizations

- NIST SP 800-207, Zero Trust Architecture

- Google Cloud, Gemini Enterprise Agents

- OpenTelemetry, Semantic Conventions for Generative AI Spans